What MAC Does

Constitutional AI steers LLM behavior through natural-language rules, but writing those rules by hand is hard, and getting them right is harder. Existing prompt optimizers automate this but fall short: they need many labeled examples, produce opaque token-level edits, and hit diminishing returns as prompts grow.

MAC fixes this. Instead of editing a flat prompt string, MAC optimizes over a structured set of rules -- a constitution -- using a network of four specialized agents. Given a training set and a scoring function, it runs a training loop: the agents propose new rules, test them on a held-out batch, and keep only the rules that improve the score. The result is a plain-English constitution you can read, audit, hand-edit, and transfer to a different model without retraining.

Real rules MAC learned for PII tagging

Legal: "Mark as private specific dates when they appear in the context of personal events or actions, such as births, deaths, or significant life events. Do not mark general references or narrative text." Examples: mark "1975", "22 August 2003"; do not mark "on a day in June".

Healthcare: "Mark terms such as heart failure subtypes (e.g., diastolic heart failure, systolic heart failure) when explicitly mentioned in a patient's medical history as private. Do not mark generic medical conditions without an explicit subtype."

Finance: "Mark as private any phrase indicating a specific financial timeframe (e.g., FY2022, YTD FY2021) when it appears in direct association with identifiable information. Do not mark standalone labels without specific identifiers."

- Explainable -- every rule is natural language you can read, audit, and hand-edit

- Structured -- optimizes over a set of rules, not a monolithic prompt blob; rules are added, edited, or removed independently

- Auditable -- each proposed rule is validated against a held-out batch before acceptance; you see exactly what changed and why

- Transferable -- a constitution learned on one model works on another without retraining

- Sample-efficient -- converges with far fewer labeled examples than GEPA or MIPRO

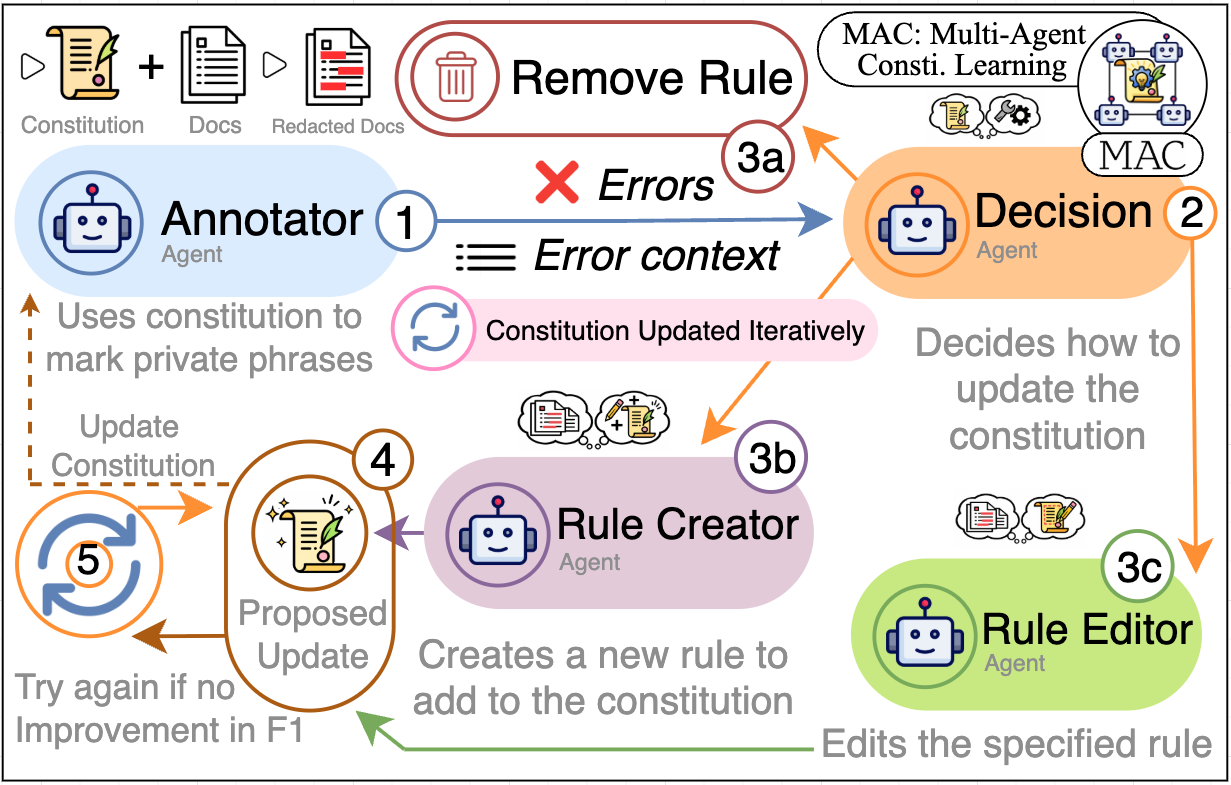

How It Works

Four agents coordinate in a closed loop each epoch:

- Annotator runs the current constitution on a training batch and scores each example

- Decision Agent looks at the errors and decides whether to add a new rule or edit an existing one

- Rule Proposer drafts a candidate rule targeting the observed error pattern

- Rule Editor refines existing rules to remove contradictions or sharpen specificity

Every proposed change is tested on a held-out validation batch. If the score goes up, the change is accepted and the constitution advances to the next version. If not, the change is discarded. Because only score-improving changes are kept, the constitution's validation score never decreases from one version to the next.
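The accept-or-discard step can be sketched as a greedy comparison on the validation batch. This is a minimal illustration, not MAC's implementation: `accept_if_better`, `toy_score`, and the keyword-style "rules" are hypothetical stand-ins for the real agents and scoring function.

```python
from typing import Callable, List, Sequence, Tuple

Rule = str
Constitution = List[Rule]
Example = Tuple[str, str]  # (input text, gold label)


def accept_if_better(
    current: Constitution,
    candidate: Constitution,
    val_batch: Sequence[Example],
    score: Callable[[Constitution, Sequence[Example]], float],
) -> Tuple[Constitution, bool]:
    """Keep the candidate only if it scores strictly higher on val_batch."""
    if score(candidate, val_batch) > score(current, val_batch):
        return candidate, True
    return current, False


def toy_score(constitution: Constitution, batch: Sequence[Example]) -> float:
    """Toy accuracy: each 'rule' is a keyword that marks a text as private."""
    correct = 0
    for text, gold in batch:
        pred = "private" if any(kw in text for kw in constitution) else "public"
        correct += pred == gold
    return correct / len(batch)


val_batch = [
    ("born 22 August 2003", "private"),
    ("on a day in June", "public"),
    ("FY2022 revenue for John Doe", "private"),
]

constitution: Constitution = []
history = []
for proposed_rule in ["2003", "day", "FY2022"]:
    constitution, accepted = accept_if_better(
        constitution, constitution + [proposed_rule], val_batch, toy_score
    )
    history.append((proposed_rule, accepted))

print(history)       # which proposals were kept
print(constitution)  # rules that survived validation
```

In this toy run the overly broad keyword "day" is rejected because it lowers validation accuracy, while the other two proposals are kept.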

The meta-model and task adaptation

MAC works on any task because of a fifth component: a meta-model that runs once before training starts. It reads your task description, inspects real data samples, and rewrites the four agent prompts to be domain-specific -- replacing generic placeholders with actual instructions about your task format, output schema, and evaluation criteria. This is a structural rewrite, not a word swap. After adaptation, all four agents speak the language of your task. You can watch this step live in the terminal:

```text
1/4 ✓ annotator adapted (12s)
2/4 ✓ decision adapted (18s)
3/4 ✓ rule_proposer adapted (24s)
4/4 ✓ rule_editor adapted (31s)
```
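The adaptation pass can be pictured as one rewrite call per agent prompt. The sketch below is an assumption-laden illustration: `adapt_prompts` and `fake_rewrite` are hypothetical names, and the stub simply appends task details, whereas the real meta-model is an LLM that restructures each prompt.

```python
import time
from typing import Callable, Dict, List

# Agent names taken from the terminal log above.
AGENTS = ["annotator", "decision", "rule_proposer", "rule_editor"]

RewriteFn = Callable[[str, str, List[str]], str]


def adapt_prompts(
    generic_prompts: Dict[str, str],
    task_description: str,
    samples: List[str],
    rewrite: RewriteFn,
) -> Dict[str, str]:
    """Run the (hypothetical) rewrite function once per agent prompt."""
    adapted = {}
    for i, name in enumerate(AGENTS, start=1):
        start = time.perf_counter()
        adapted[name] = rewrite(generic_prompts[name], task_description, samples)
        elapsed = time.perf_counter() - start
        print(f"{i}/{len(AGENTS)} ✓ {name} adapted ({elapsed:.0f}s)")
    return adapted


def fake_rewrite(prompt: str, task: str, samples: List[str]) -> str:
    """Stand-in for an LLM call; it only appends the task and one sample."""
    return f"{prompt}\nTask: {task}\nExample input: {samples[0]}"


generic = {name: f"You are the {name} agent." for name in AGENTS}
adapted = adapt_prompts(
    generic,
    task_description="Tag PII spans in legal documents.",
    samples=["The applicant was born on 22 August 2003."],
    rewrite=fake_rewrite,
)
```

Passing the rewrite step in as a callable keeps the loop testable without a model endpoint.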

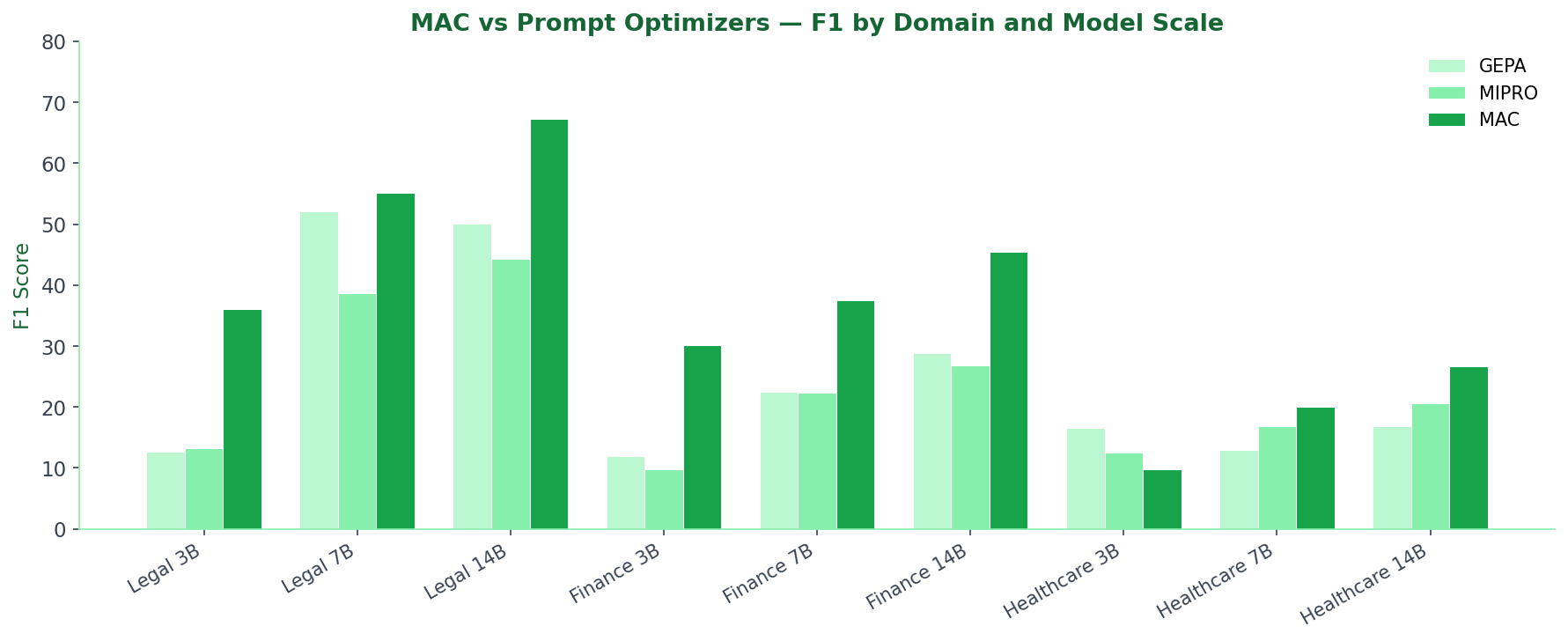

MAC vs Prompt Optimizers

All three optimizers were benchmarked on domain-specific PII tagging across Legal, Finance, and Healthcare documents. The worker model was Qwen2.5-Instruct at 3B, 7B, and 14B parameter scales. MAC ran in Auto-Adapt mode with cloud MAC agents (gpt-4o). GEPA and MIPRO ran with their default configurations and the same underlying model. Scores are F1 on held-out test sets.
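For readers unfamiliar with the metric, here is a minimal sketch of span-level F1 for one document. It assumes exact-match spans given as character offsets; the source does not state which matching scheme the benchmark uses, so treat `span_f1` as illustrative only.

```python
from typing import Set, Tuple

Span = Tuple[int, int]  # (start, end) character offsets, end exclusive


def span_f1(predicted: Set[Span], gold: Set[Span]) -> float:
    """Exact-match F1 between predicted and gold spans, in the range [0, 1]."""
    if not predicted and not gold:
        return 1.0  # nothing to find and nothing predicted
    true_positives = len(predicted & gold)
    if true_positives == 0:
        return 0.0
    precision = true_positives / len(predicted)
    recall = true_positives / len(gold)
    return 2 * precision * recall / (precision + recall)


gold = {(0, 4), (10, 24), (30, 36)}
predicted = {(0, 4), (10, 24), (40, 45), (50, 52)}

# 2 of 4 predictions are correct (precision 0.5); 2 of 3 gold spans found.
print(round(span_f1(predicted, gold), 3))
```

The tables below report F1 on a 0 to 100 scale, which corresponds to multiplying this value by 100.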

| Dataset | Method | 3B | 7B | 14B |
|---|---|---|---|---|
| Legal | GEPA | 12.7 | 52.1 | 50.1 |
| Legal | MIPRO | 13.2 | 38.6 | 44.3 |
| Legal | MAC | 36.0 | 55.1 | 67.3 |
| Finance | GEPA | 11.9 | 22.5 | 28.8 |
| Finance | MIPRO | 9.8 | 22.3 | 26.8 |
| Finance | MAC | 30.1 | 37.5 | 45.5 |
| Healthcare | GEPA | 16.5 | 12.9 | 16.8 |
| Healthcare | MIPRO | 12.5 | 16.8 | 20.6 |
| Healthcare | MAC | 9.7 | 20.1 | 26.7 |

MAC scores the highest F1 in 8 of 9 configurations. The largest gain is Legal at 3B scale: MAC scores 36.0 against the next-best 13.2 (MIPRO), a gain of 22.8 F1 points, or roughly +173% relative when computed from the rounded table values. The exception is Healthcare at 3B, where both baselines beat MAC and GEPA leads it by 6.8 F1 points.
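The summary figures can be rechecked directly from the table. The snippet below uses only the rounded scores printed above, so the relative gain may differ slightly from one computed on unrounded scores.

```python
# F1 scores copied from the table above: (dataset, scale) -> method -> F1.
scores = {
    ("Legal", "3B"): {"GEPA": 12.7, "MIPRO": 13.2, "MAC": 36.0},
    ("Legal", "7B"): {"GEPA": 52.1, "MIPRO": 38.6, "MAC": 55.1},
    ("Legal", "14B"): {"GEPA": 50.1, "MIPRO": 44.3, "MAC": 67.3},
    ("Finance", "3B"): {"GEPA": 11.9, "MIPRO": 9.8, "MAC": 30.1},
    ("Finance", "7B"): {"GEPA": 22.5, "MIPRO": 22.3, "MAC": 37.5},
    ("Finance", "14B"): {"GEPA": 28.8, "MIPRO": 26.8, "MAC": 45.5},
    ("Healthcare", "3B"): {"GEPA": 16.5, "MIPRO": 12.5, "MAC": 9.7},
    ("Healthcare", "7B"): {"GEPA": 12.9, "MIPRO": 16.8, "MAC": 20.1},
    ("Healthcare", "14B"): {"GEPA": 16.8, "MIPRO": 20.6, "MAC": 26.7},
}


def best_baseline(row: dict) -> float:
    """Highest score among the two baseline optimizers."""
    return max(row["GEPA"], row["MIPRO"])


def relative_gain(new: float, old: float) -> float:
    """Relative improvement of new over old, in percent."""
    return (new - old) / old * 100


wins = [cfg for cfg, row in scores.items() if row["MAC"] > best_baseline(row)]
losses = [cfg for cfg in scores if cfg not in wins]

legal_3b = scores[("Legal", "3B")]
gain = relative_gain(legal_3b["MAC"], best_baseline(legal_3b))

health_3b = scores[("Healthcare", "3B")]
gap = round(best_baseline(health_3b) - health_3b["MAC"], 1)

print(f"MAC wins {len(wins)} of {len(scores)}; loses on {losses}")
print(f"Legal 3B relative gain: {gain:.1f}%")
print(f"Healthcare 3B gap: {gap} F1 points")
```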

MAC vs Pretrained Taggers (at 14B scale)

These comparisons are against purpose-built PII taggers: Presidio (a rule-based system from Microsoft) and GLiNER (a span-extraction model fine-tuned for NER). MAC uses a 14B general-purpose Qwen2.5 model with a learned constitution and no fine-tuning on PII data. Gain is relative to the best baseline's F1.

| Domain | MAC | Best Baseline | Gain |
|---|---|---|---|
| Legal | 67.3 | 57.3 (Presidio) | +17% |
| Finance | 45.5 | 44.7 (Presidio) | +2% |
| Healthcare | 26.7 | 12.0 (GLiNER) | +123% |

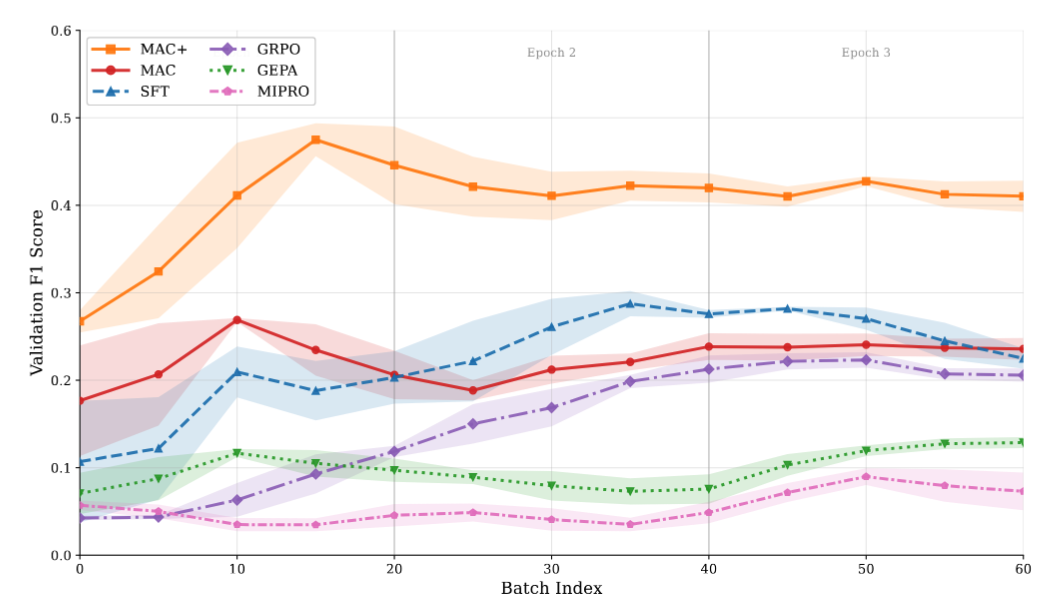

Training Dynamics

Validation F1 over training batches on 3B Qwen2.5 models (ECHR legal dataset). MAC's score climbs steadily across batches while GEPA and MIPRO plateau or fluctuate. The gap widens as the constitution accumulates more rules.

Results — General-Purpose Benchmarks

MAC is task-agnostic. Below are results across three standard benchmarks ordered by largest gain: fact verification (HoVer), multi-hop QA (HotpotQA), and grade-school math (GSM8K).

Reading the tables

Each row is one (worker, MAC agents, style) configuration:

- Worker -- the model being optimised; annotates every example in every batch

- MAC Agents -- the decision, rule-proposer, and rule-editor agents that learn the constitution

- Meta-Model -- adapts all four agent prompts to your task before training starts; identical to MAC Agents in all runs below

- Style -- adapt: MAC builds the initial prompt from scratch via the meta-model; custom: you supply your own prompt containing a {{CONSTITUTION_BLOCK}} placeholder
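In custom style the prompt carries a {{CONSTITUTION_BLOCK}} marker. Here is a minimal sketch of how such a marker could be filled, assuming the rules are rendered as a numbered list. The source does not say how MAC formats the block, and `render_prompt` is a hypothetical helper, not part of MAC's API.

```python
from typing import List

PLACEHOLDER = "{{CONSTITUTION_BLOCK}}"


def render_prompt(template: str, rules: List[str]) -> str:
    """Substitute the placeholder with a numbered list of rules (assumed format)."""
    if PLACEHOLDER not in template:
        raise ValueError(f"template must contain {PLACEHOLDER}")
    block = "\n".join(f"{i}. {rule}" for i, rule in enumerate(rules, start=1))
    return template.replace(PLACEHOLDER, block)


template = (
    "You are a PII tagger for legal documents.\n"
    "Follow these rules:\n"
    "{{CONSTITUTION_BLOCK}}\n"
    "Return the private spans."
)
rules = [
    "Mark as private specific dates tied to personal events.",
    "Do not mark general references or narrative text.",
]

prompt = render_prompt(template, rules)
print(prompt)
```

Keeping the rules outside the template means each new constitution version only changes the substituted block, never the user's surrounding prompt.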

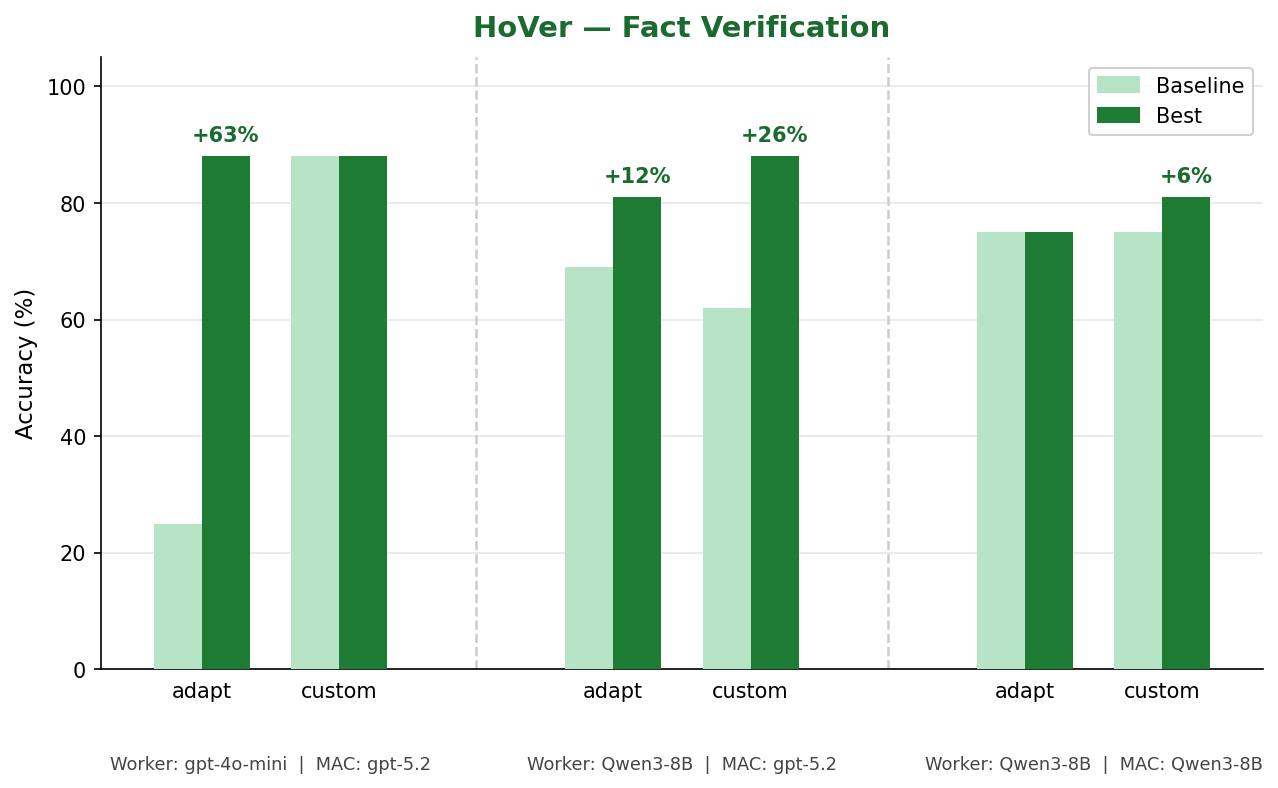

HoVer — Fact Verification

HoVer asks the model to verify multi-hop factual claims against Wikipedia evidence, a binary yes/no task. The largest gain, +63 percentage points (25% to 88%), comes from a gpt-4o-mini worker with gpt-5.2 MAC agents in Auto-Adapt mode. With a local Qwen3-8B worker paired with gpt-5.2 MAC agents, MAC still adds +26 points in Custom Prompt mode (62% to 88%). Delta values are absolute percentage-point differences between Baseline and Best.

| Worker | MAC Agents | Meta-Model | Style | Baseline | Best | Delta |
|---|---|---|---|---|---|---|
| gpt-4o-mini | gpt-5.2 | gpt-5.2 | adapt | 25% | 88% | +63% |
| gpt-4o-mini | gpt-5.2 | gpt-5.2 | custom | 88% | 88% | 0% |
| Qwen3-8B | gpt-5.2 | gpt-5.2 | adapt | 69% | 81% | +12% |
| Qwen3-8B | gpt-5.2 | gpt-5.2 | custom | 62% | 88% | +26% |
| Qwen3-8B | Qwen3-8B | Qwen3-8B | adapt | 75% | 75% | 0% |
| Qwen3-8B | Qwen3-8B | Qwen3-8B | custom | 75% | 81% | +6% |

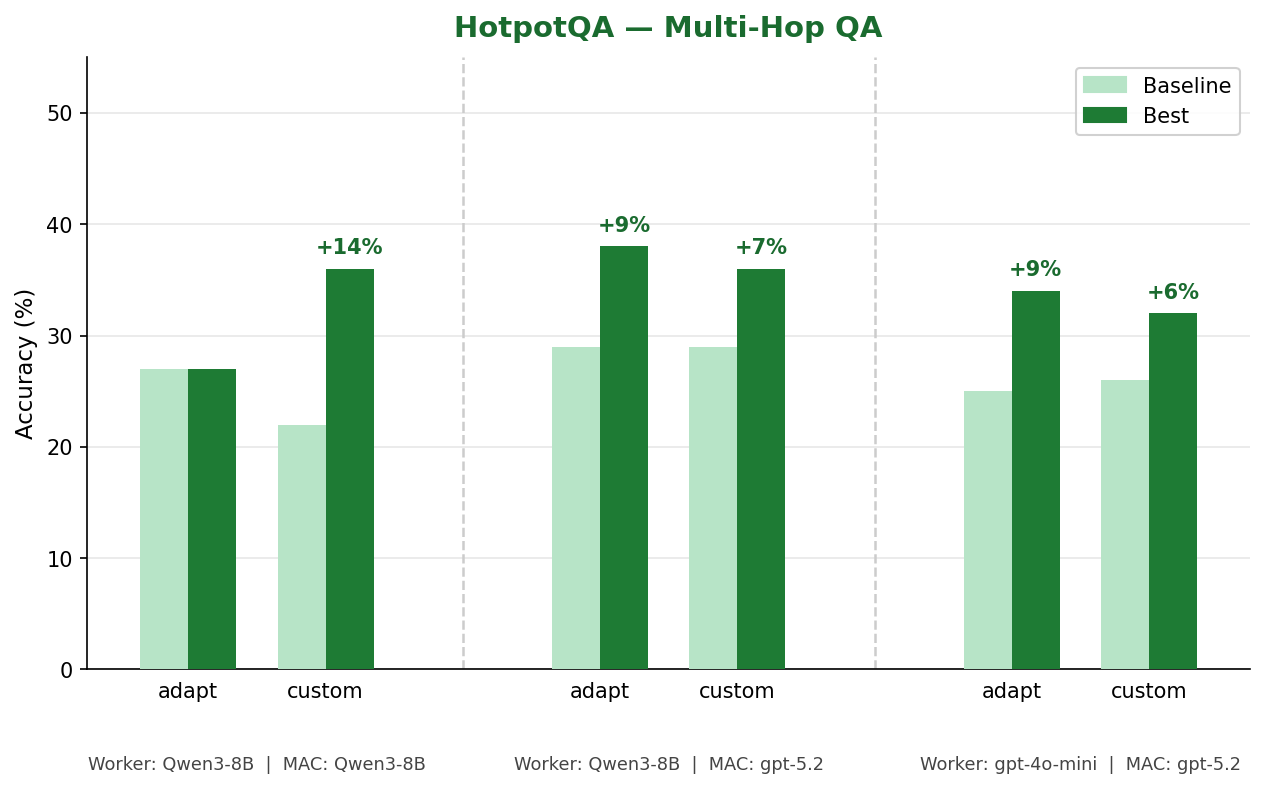

HotpotQA — Multi-Hop QA

HotpotQA requires chaining facts across two Wikipedia documents to answer a question. Baselines sit between 22% and 29%. MAC improves every configuration except one: the fully local Qwen3-8B setup in adapt mode stays at 27%. The largest gain, +14 points (22% to 36%), comes from the fully local setup in custom mode, where Qwen3-8B serves as worker, MAC agents, and meta-model. A likely reason is that the local model starts from a lower baseline and makes more structured errors for the rule proposer to target.

| Worker | MAC Agents | Meta-Model | Style | Baseline | Best | Delta |
|---|---|---|---|---|---|---|
| Qwen3-8B | Qwen3-8B | Qwen3-8B | adapt | 27% | 27% | 0% |
| Qwen3-8B | Qwen3-8B | Qwen3-8B | custom | 22% | 36% | +14% |
| Qwen3-8B | gpt-5.2 | gpt-5.2 | adapt | 29% | 38% | +9% |
| Qwen3-8B | gpt-5.2 | gpt-5.2 | custom | 29% | 36% | +7% |
| gpt-4o-mini | gpt-5.2 | gpt-5.2 | adapt | 25% | 34% | +9% |
| gpt-4o-mini | gpt-5.2 | gpt-5.2 | custom | 26% | 32% | +6% |

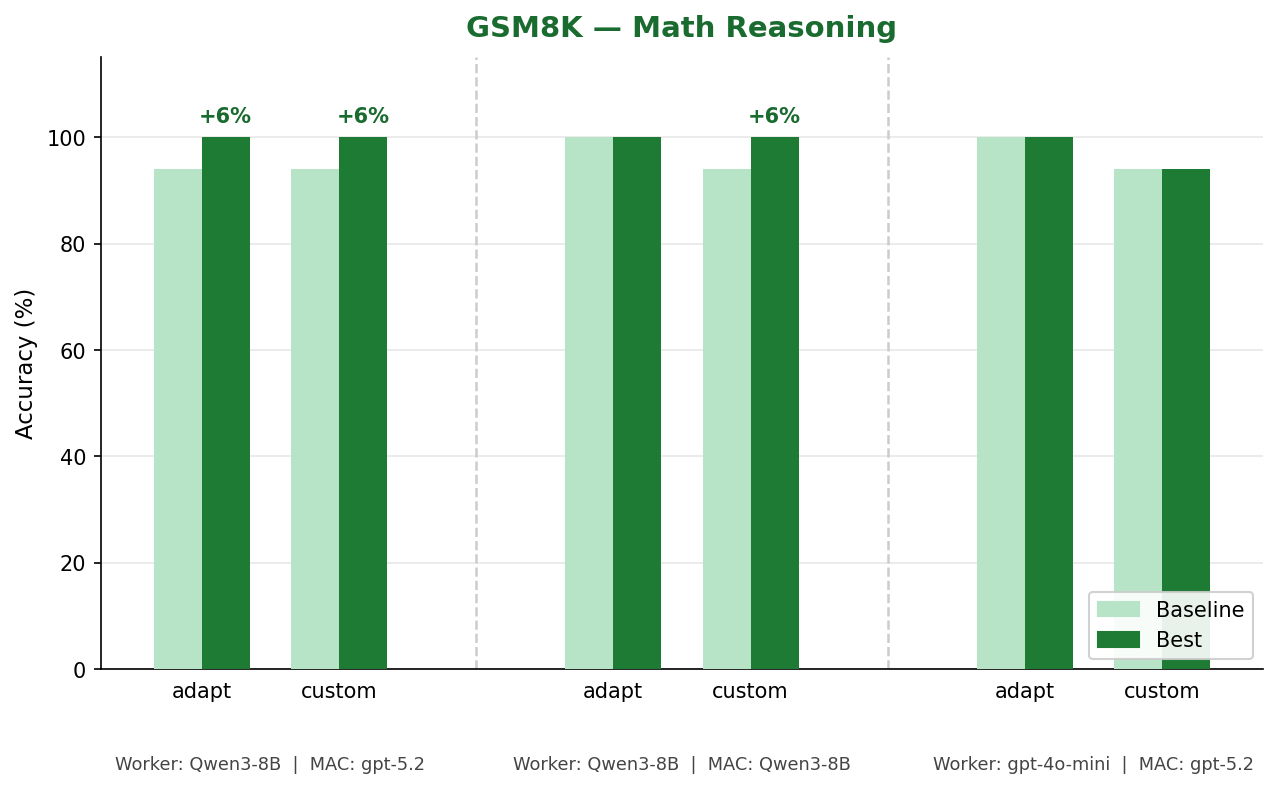

GSM8K — Math Reasoning

GSM8K tests multi-step arithmetic word problems. The Qwen3-8B baseline already scores 94% or higher zero-shot, which leaves little headroom. Even so, MAC raises it to 100% in both modes when paired with gpt-5.2 MAC agents, and the fully local Qwen3-8B setup also reaches 100% in Custom Prompt mode.

| Worker | MAC Agents | Meta-Model | Style | Baseline | Best | Delta |
|---|---|---|---|---|---|---|
| Qwen3-8B | gpt-5.2 | gpt-5.2 | adapt | 94% | 100% | +6% |
| Qwen3-8B | gpt-5.2 | gpt-5.2 | custom | 94% | 100% | +6% |
| Qwen3-8B | Qwen3-8B | Qwen3-8B | adapt | 100% | 100% | 0% |
| Qwen3-8B | Qwen3-8B | Qwen3-8B | custom | 94% | 100% | +6% |
| gpt-4o-mini | gpt-5.2 | gpt-5.2 | adapt | 100% | 100% | 0% |
| gpt-4o-mini | gpt-5.2 | gpt-5.2 | custom | 94% | 94% | 0% |

Any Task, Any Model

MAC is not tied to a single domain or model family. Give it a task_description, a few labeled examples, and a scoring function, and it learns a constitution for any task you can evaluate -- classification, extraction, math, QA, tool calling, and more.
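As an illustration of the kind of scoring function mentioned above, here is a sketch for numeric-answer tasks such as GSM8K. The `(prediction, gold) -> float` signature and the example record layout are assumptions; the source does not specify what MAC expects, so adapt both to the actual interface.

```python
import re
from typing import Optional


def last_number(text: str) -> Optional[str]:
    """Return the last number in the text, ignoring thousands separators."""
    matches = re.findall(r"-?\d+(?:\.\d+)?", text.replace(",", ""))
    return matches[-1] if matches else None


def exact_match_score(prediction: str, gold: str) -> float:
    """1.0 if the final number in the prediction equals the gold answer, else 0.0."""
    pred_num = last_number(prediction)
    gold_num = last_number(gold)
    if pred_num is None or gold_num is None:
        return 0.0
    return 1.0 if float(pred_num) == float(gold_num) else 0.0


# Hypothetical labeled examples; the field names are illustrative only.
examples = [
    {"input": "A crate holds 250 apples. How many apples are in 5 crates?", "gold": "1250"},
    {"input": "Tom has 10 pens and gives away 3. How many are left?", "gold": "7"},
]
predictions = ["5 * 250 = 1,250, so the total is 1,250 apples.", "He has 8 left."]

scores = [exact_match_score(p, ex["gold"]) for p, ex in zip(predictions, examples)]
print(scores, sum(scores) / len(scores))
```

Stripping commas is a simplification: it handles "1,250" but would also merge comma-separated lists of digits, so a production scorer would need stricter parsing.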

Under the hood, MAC uses a three-tier model setup:

- Tier 1 -- Worker: annotates examples using the current constitution. Can be a cheap local model (Qwen3-8B on vLLM) or a cloud API model (gpt-4o-mini). Runs on every example in every batch, so cost matters here.

- Tier 2 -- MAC agents: the decision, rule-proposer, and rule-editor agents that learn the constitution (the annotator runs on the Tier 1 worker). Should be a strong model (gpt-4o or equivalent). Runs far less frequently than the worker.

- Tier 3 -- Adapt model: rewrites agent prompts before training starts. Defaults to the same model as Tier 2 if not set separately.

```python
compiler = MAC(
    model="Qwen/Qwen3-8B",                # Tier 1: worker (cheap / local)
    base_url="http://localhost:8000/v1",  # vLLM server at any port
    mac_model="gpt-4o",                   # Tier 2: MAC agents (strong)
    # adapt_model="gpt-4o",               # Tier 3: defaults to mac_model
    task_description="Solve AIME competition math problems.",
    rule_type="math reasoning rules",
)
```

See Model Configuration for the full fallback cascade, vLLM port configuration, and provider examples.
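The one fallback stated on this page, Tier 3 defaulting to Tier 2, can be pictured with a small sketch. `ModelTiers` is a hypothetical container written for illustration; it is not MAC's configuration class and does not cover the full cascade documented in Model Configuration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ModelTiers:
    """Hypothetical holder for the three model tiers described above."""

    model: str                         # Tier 1: worker
    mac_model: str                     # Tier 2: MAC agents
    adapt_model: Optional[str] = None  # Tier 3: optional override

    def resolved_adapt_model(self) -> str:
        """Use adapt_model when set, otherwise fall back to mac_model."""
        return self.adapt_model if self.adapt_model is not None else self.mac_model


default_tiers = ModelTiers(model="Qwen/Qwen3-8B", mac_model="gpt-4o")
custom_tiers = ModelTiers(
    model="Qwen/Qwen3-8B", mac_model="gpt-4o", adapt_model="gpt-4o-mini"
)

print(default_tiers.resolved_adapt_model())
print(custom_tiers.resolved_adapt_model())
```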

Citation

```bibtex
@article{thareja2025mac,
  title={MAC: Multi-Agent Constitution Learning for Generalizable Text Annotation},
  author={Thareja, Rushil},
  year={2025}
}
```

License

MAC is released under a dual license:

- Non-commercial use (academic research, education, personal projects): free

- Commercial use: requires a separate license. Contact rushil.thareja@mbzuai.ac.ae